This is a guide on how to set up OpenFaaS on Google Kubernetes Engine (GKE) with a cost-effective, auto-scaling, multi-stage deployment.

Experience level: intermediate OpenFaaS / intermediate Kubernetes & GKE

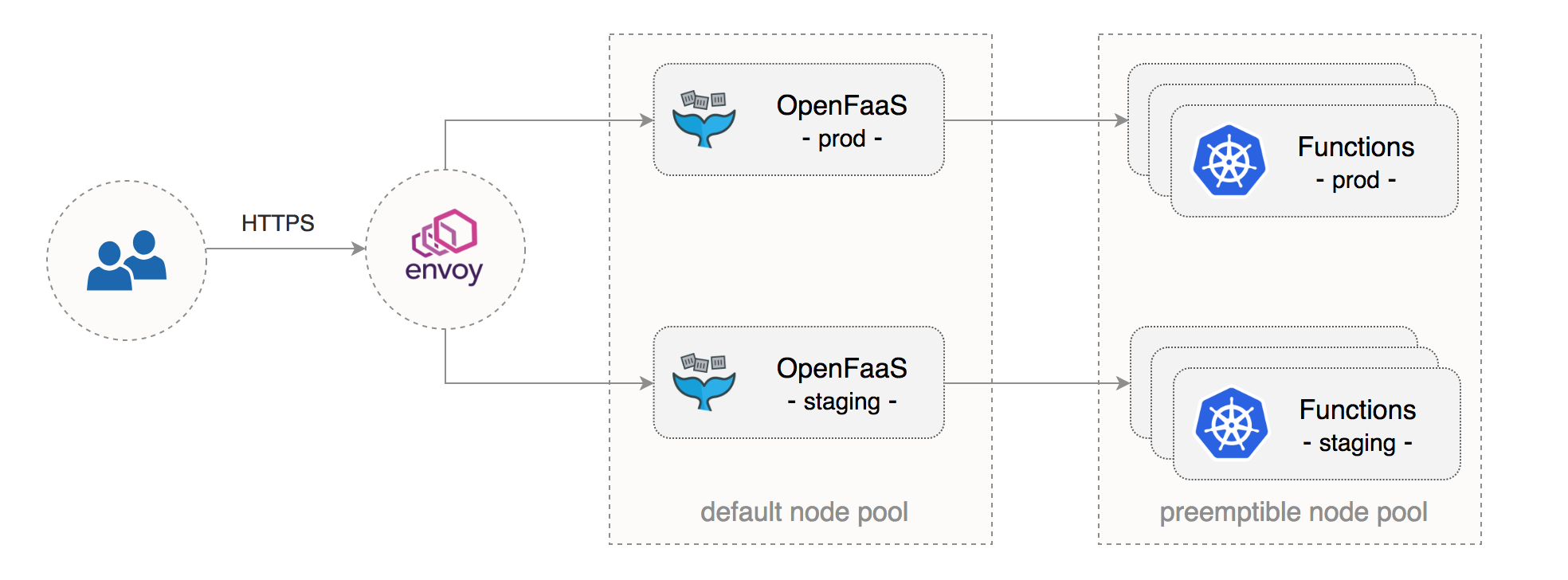

At the end of this guide you will be running two OpenFaaS environments on the same GKE cluster with the following characteristics:

- a dedicated GKE node pool for OpenFaaS core services

- a dedicated node pool made out of preemptible VMs for OpenFaaS functions

- autoscaling for functions and their underlying infrastructure

- isolated staging and production environments with network policies

- secure OpenFaaS ingress with Let’s Encrypt TLS and authentication

This setup can enable multiple teams to share the same Continuous Delivery (CD) pipeline with staging/production environments hosted on GKE and development taking place on a local environment such as Minikube or Docker for Mac.

GKE cluster setup

We will be creating a cluster on Google Kubernetes Engine (GKE). If you don’t have an account, you can sign up here for free credit.

Create a cluster with two nodes and network policy enabled:

zone=europe-west3-a

k8s_version=$(gcloud container get-server-config --zone=${zone} --format=json \
  | jq -r '.validNodeVersions[0]')

gcloud container clusters create openfaas \
  --cluster-version=${k8s_version} \
  --zone=${zone} \
  --num-nodes=2 \
  --machine-type=n1-standard-1 \
  --no-enable-cloud-logging \
  --disk-size=30 \
  --enable-autorepair \
  --enable-network-policy \
  --scopes=gke-default,compute-rw,storage-rw

The above command will create a node pool named default-pool consisting of n1-standard-1 (vCPU: 1, RAM 3.75GB, DISK: 30GB) VMs.

You will use the default pool to run the following OpenFaaS services:

- API Gateway and Kubernetes Operator

- Async services (NATS streaming and queue worker)

- Monitoring and Autoscaling services (Prometheus and Alertmanager)

Create a node pool of n1-highcpu-4 (vCPU: 4, RAM 3.60GB, DISK: 30GB) preemptible VMs with autoscaling enabled:

gcloud container node-pools create fn-pool \
  --cluster=openfaas \
  --preemptible \
  --node-version=${k8s_version} \
  --zone=${zone} \
  --num-nodes=1 \
  --enable-autoscaling --min-nodes=2 --max-nodes=4 \
  --machine-type=n1-highcpu-4 \
  --disk-size=30 \
  --enable-autorepair \
  --scopes=gke-default

Preemptible VMs are up to 80% cheaper than regular instances and are terminated and replaced after a maximum of 24 hours.

In order to avoid all nodes being replaced at the same time, wait for 30 minutes and scale up the function pool to two nodes. Open a new terminal and run the scale up command:

sleep 1800 && gcloud container clusters resize openfaas \
  --size=2 \
  --node-pool=fn-pool \
  --zone=${zone}

Now let that command run in the background and carry on with the next step.

GKE provides an audit log of all key events in your cluster. Use the following command to see when each VM was preempted:

gcloud compute operations list | grep compute.instances.preempted

The cluster above, along with a GCP load balancer forwarding rule and 30GB of ingress traffic per month, will cost the following:

| Role | Type | Usage | Price per month |
|---|---|---|---|
| 2 x OpenFaaS Core Services | n1-standard-1 | 1460 total hours per month | $62.55 |
| 2 x OpenFaaS Functions | n1-highcpu-4 | 1460 total hours per month | $53.44 |
| Persistent disk | Storage | 120 GB | $5.76 |
| Container Registry | Cloud Storage | 300 GB | $6.90 |
| Forwarding rules | Forwarding rules | 1 | $21.90 |
| Load Balancer ingress | Ingress | 30 GB | $0.30 |
| Total | | | $150.84 |

The cost estimation for a total of 10 vCPU and 22GB RAM was generated with the Google Cloud pricing calculator on 31 July 2018 and could change any time.
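
As a quick sanity check, the line items in the table can be summed in the shell. This is only a sketch: the prices are copied from the table, and the 730 hours per node per month is an assumption inferred from the 1460 total hours listed for two nodes.

```shell
# Monthly line items in USD, copied from the cost table.
items="62.55 53.44 5.76 6.90 21.90 0.30"

# Sum the line items with awk and format the result to two decimals.
total=$(printf '%s\n' $items | awk '{ sum += $1 } END { printf "%.2f", sum }')

# Assumption: 730 hours per node per month, two nodes per pool.
hours=$(( 2 * 730 ))

echo "node hours per pool: ${hours}"
echo "sum of line items: ${total} USD"
```

The rounded line items add up to 150.85, one cent above the total reported in the table. The gap most likely comes from the calculator rounding after summing, though that is a guess.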

GKE TLS Ingress setup

When exposing OpenFaaS on the Internet, it is recommended to enable HTTPS so that all traffic to the API gateway is encrypted. To do that you’ll need the following tools:

- Heptio Contour as Kubernetes Ingress controller (or another ingress controller such as Nginx)

- JetStack cert-manager as Let’s Encrypt provider

Set up credentials for kubectl:

gcloud container clusters get-credentials openfaas -z=${zone}

Create a cluster admin role binding:

kubectl create clusterrolebinding "cluster-admin-$(whoami)" \
  --clusterrole=cluster-admin \
  --user="$(gcloud config get-value core/account)"

Install Helm CLI with Homebrew:

brew install kubernetes-helm

Create a service account and a cluster role binding for Tiller:

kubectl -n kube-system create sa tiller

kubectl create clusterrolebinding tiller-cluster-rule \
  --clusterrole=cluster-admin \
  --serviceaccount=kube-system:tiller

Deploy Tiller in the kube-system namespace:

helm init --skip-refresh --upgrade --service-account tiller

Heptio Contour is an ingress controller based on the Envoy reverse proxy that supports dynamic configuration updates. Install Contour with:

kubectl apply -f https://j.hept.io/contour-deployment-rbac

Find the Contour address with:

kubectl -n heptio-contour describe svc/contour | grep Ingress | awk '{ print $NF }'

Go to your DNS provider and create an A record for each OpenFaaS instance:

host openfaas.example.com

openfaas.example.com has address 35.197.248.216

host openfaas-stg.example.com

openfaas-stg.example.com has address 35.197.248.217

Install cert-manager with Helm:

helm install --name cert-manager \
  --namespace kube-system \
  stable/cert-manager

Create a cluster issuer definition (replace email@example.com with a valid email address):
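
The issuer manifest itself is missing from this copy of the post. The snippet below is a minimal sketch of what such a definition might look like, not the author's original. It assumes the `certmanager.k8s.io/v1alpha1` API served by cert-manager releases of that period and the HTTP-01 challenge. The names `letsencrypt` and `letsencrypt-cert` are hypothetical.

```shell
# Write a sketch of a Let's Encrypt ClusterIssuer to letsencrypt-issuer.yaml.
# Assumptions: certmanager.k8s.io/v1alpha1 API, HTTP-01 challenge,
# hypothetical issuer name "letsencrypt" and secret name "letsencrypt-cert".
cat > letsencrypt-issuer.yaml <<'EOF'
apiVersion: certmanager.k8s.io/v1alpha1
kind: ClusterIssuer
metadata:
  name: letsencrypt
spec:
  acme:
    # Replace with a valid email address.
    email: email@example.com
    # Let's Encrypt production ACME v2 endpoint.
    server: https://acme-v02.api.letsencrypt.org/directory
    privateKeySecretRef:
      name: letsencrypt-cert
    http01: {}
EOF
```

Newer cert-manager releases use a different API group and schema, so check the version installed on your cluster before applying a definition like this.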

Save the above resource as letsencrypt-issuer.yaml and then apply it:

kubectl apply -f ./letsencrypt-issuer.yaml

Network policies setup

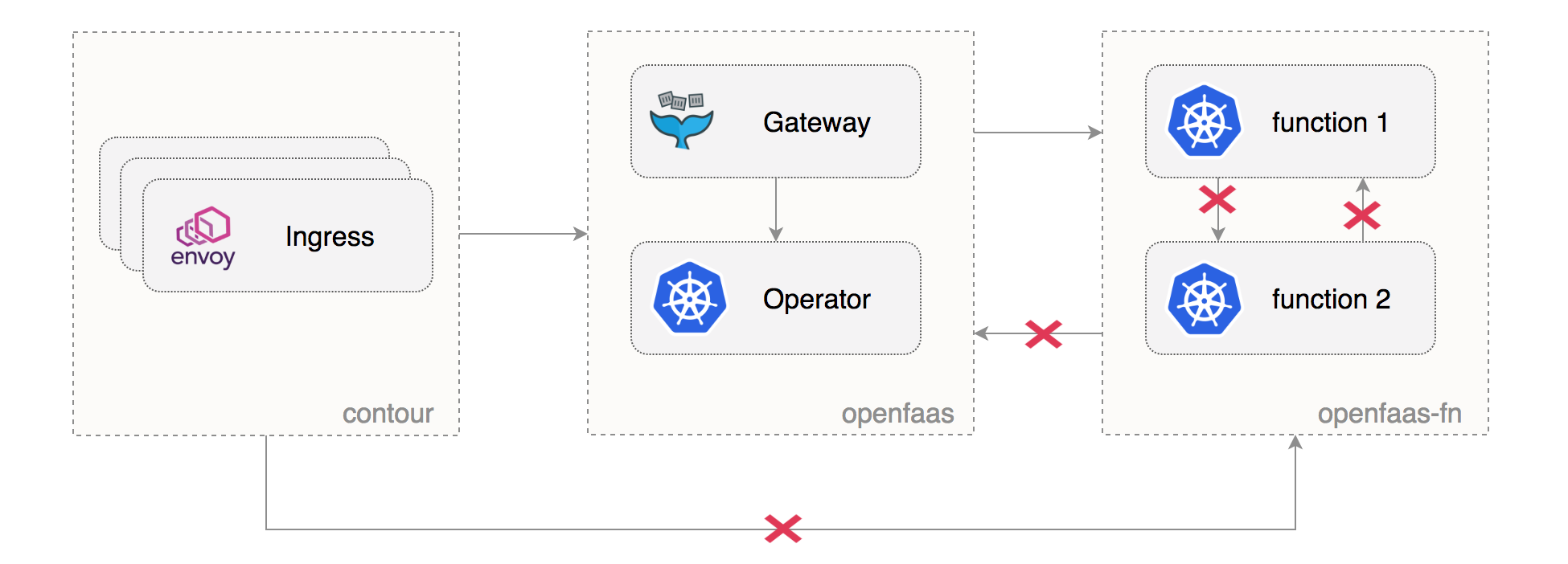

An OpenFaaS instance is composed of two namespaces: one for the core services and one for functions. Kubernetes namespaces alone offer only a logical separation between workloads. To enforce network segregation we need to apply access role labels to the namespaces and create network policies.

Create the OpenFaaS staging and production namespaces with the access role labels:
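
The namespace manifest is missing from this copy of the post. The sketch below reconstructs it from the namespace names used throughout the guide. The `access: openfaas-system` label mirrors the one applied to the heptio-contour namespace later in this section. The `role: openfaas-system` label is an assumption about what the network policies select on, so check it against the policy file you apply.

```shell
# Write the four namespaces to openfaas-ns.yaml.
# Only the core namespaces carry the access role labels; the function
# namespaces are left unlabeled so that policies can isolate them.
# Assumption: the "role" label key and value are illustrative.
cat > openfaas-ns.yaml <<'EOF'
apiVersion: v1
kind: Namespace
metadata:
  name: openfaas-stg
  labels:
    role: openfaas-system
    access: openfaas-system
---
apiVersion: v1
kind: Namespace
metadata:
  name: openfaas-stg-fn
---
apiVersion: v1
kind: Namespace
metadata:
  name: openfaas-prod
  labels:
    role: openfaas-system
    access: openfaas-system
---
apiVersion: v1
kind: Namespace
metadata:
  name: openfaas-prod-fn
EOF
```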

Save the above resource as openfaas-ns.yaml and then apply it:

kubectl apply -f ./openfaas-ns.yaml

Allow ingress traffic from the heptio-contour namespace to both OpenFaaS environments:

kubectl label namespace heptio-contour access=openfaas-system

Create network policies to isolate the OpenFaaS core services from the function namespaces:

kubectl apply -f https://gist.githubusercontent.com/stefanprodan/fc8d7ef8f4af3d0d81a9f28ff8c6edcb/raw/9ad1f60a5b6b5d474ac1551b39779824c32f3251/network-policies.yaml

Note that the above configuration will prohibit functions from calling each other or from reaching the OpenFaaS core services.
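
To illustrate how namespace labels can produce this isolation, here is a sketch of a pair of policies for the staging environment. It is not a copy of the gist, which remains authoritative. The policy names, the file name `network-policy-sketch.yaml` and the `access` / `role` label selectors are assumptions made for illustration.

```shell
# Write an illustrative pair of NetworkPolicy objects for staging.
# Assumption: core namespaces are labeled access=openfaas-system and
# role=openfaas-system, while function namespaces carry neither label.
cat > network-policy-sketch.yaml <<'EOF'
# Core services accept ingress only from namespaces labeled
# access=openfaas-system (for example the ingress controller namespace).
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: openfaas-stg
  namespace: openfaas-stg
spec:
  podSelector: {}
  policyTypes:
  - Ingress
  ingress:
  - from:
    - namespaceSelector:
        matchLabels:
          access: openfaas-system
---
# Functions accept ingress only from namespaces labeled
# role=openfaas-system, that is, from the core services.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: openfaas-stg-fn
  namespace: openfaas-stg-fn
spec:
  podSelector: {}
  policyTypes:
  - Ingress
  ingress:
  - from:
    - namespaceSelector:
        matchLabels:
          role: openfaas-system
EOF
```

Because the function namespace carries neither label in this sketch, traffic that originates from a function pod matches no `from` rule in either policy. That is one way to get the behaviour described in the note above.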

OpenFaaS staging setup

Generate a random password and create an OpenFaaS credentials secret:

stg_password=$(head -c 12 /dev/urandom | shasum | cut -d' ' -f1)

kubectl -n openfaas-stg create secret generic basic-auth \
  --from-literal=basic-auth-user=admin \
  --from-literal=basic-auth-password=$stg_password

Create the staging configuration (replace example.com with your own domain):
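
The values file is missing from this copy of the post. The sketch below is a reconstruction from the surrounding text, not the author's original. The host name, the namespaces and the node pool affinity come from the guide. The chart keys (`functionNamespace`, `basic_auth`, `operator.create`, `ingress.*`, `affinity`), the ingress annotations and the TLS secret name `openfaas-cert` are assumptions about the faas-netes chart and cert-manager of that period. Verify them against the chart's own values.yaml.

```shell
# Write a sketch of the staging Helm values to openfaas-stg.yaml.
# Assumption: key names follow the faas-netes chart of that period.
cat > openfaas-stg.yaml <<'EOF'
functionNamespace: openfaas-stg-fn
basic_auth: true
operator:
  create: true
  createCRD: true
ingress:
  enabled: true
  annotations:
    kubernetes.io/ingress.class: "contour"
    # Assumed to match the name of your cluster issuer.
    certmanager.k8s.io/cluster-issuer: "letsencrypt"
  hosts:
    - host: openfaas-stg.example.com
      serviceName: gateway
      servicePort: 8080
      path: /
  tls:
    - secretName: openfaas-cert
      hosts:
        - openfaas-stg.example.com
# Pin the core services to the default node pool.
affinity:
  nodeAffinity:
    requiredDuringSchedulingIgnoredDuringExecution:
      nodeSelectorTerms:
        - matchExpressions:
            - key: cloud.google.com/gke-nodepool
              operator: In
              values:
                - default-pool
EOF
```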

The OpenFaaS core services will be running on the default pool because you’ve set the affinity constraint to cloud.google.com/gke-nodepool=default-pool.

Save the above file as openfaas-stg.yaml and install the OpenFaaS staging instance from the project helm repository:

helm repo add openfaas https://openfaas.github.io/faas-netes/

helm upgrade openfaas-stg --install openfaas/openfaas \
  --namespace openfaas-stg \
  -f openfaas-stg.yaml

In a couple of seconds cert-manager should fetch a certificate from Let’s Encrypt:

kubectl -n kube-system logs deployment/cert-manager

Certificate issued successfully

OpenFaaS production setup

Generate a random password and create the basic-auth secret in the openfaas-prod namespace:

password=$(head -c 12 /dev/urandom | shasum | cut -d' ' -f1)

kubectl -n openfaas-prod create secret generic basic-auth \
  --from-literal=basic-auth-user=admin \
  --from-literal=basic-auth-password=$password

Create the production configuration (replace example.com with your own domain):
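
The production values file is also missing from this copy. The sketch below is a reconstruction, not the author's original. It assumes the same chart keys as a staging values file would use, plus `gateway.replicas`. It also assumes that the gateway pods carry the label `app: gateway`. The anti-affinity rule is written as a soft preference so that other core services sharing the same affinity block can still be scheduled, which is a design choice of this sketch.

```shell
# Write a sketch of the production Helm values to openfaas-prod.yaml.
# Assumptions: faas-netes chart key names of that period, gateway pods
# labeled app=gateway, hypothetical TLS secret name "openfaas-cert".
cat > openfaas-prod.yaml <<'EOF'
functionNamespace: openfaas-prod-fn
basic_auth: true
operator:
  create: true
  # The Function CRD is already installed on the cluster.
  createCRD: false
gateway:
  replicas: 2
ingress:
  enabled: true
  annotations:
    kubernetes.io/ingress.class: "contour"
    certmanager.k8s.io/cluster-issuer: "letsencrypt"
  hosts:
    - host: openfaas.example.com
      serviceName: gateway
      servicePort: 8080
      path: /
  tls:
    - secretName: openfaas-cert
      hosts:
        - openfaas.example.com
affinity:
  nodeAffinity:
    requiredDuringSchedulingIgnoredDuringExecution:
      nodeSelectorTerms:
        - matchExpressions:
            - key: cloud.google.com/gke-nodepool
              operator: In
              values:
                - default-pool
  # Prefer spreading gateway replicas across different nodes.
  podAntiAffinity:
    preferredDuringSchedulingIgnoredDuringExecution:
      - weight: 100
        podAffinityTerm:
          labelSelector:
            matchLabels:
              app: gateway
          topologyKey: kubernetes.io/hostname
EOF
```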

For the production deployment the OpenFaaS gateway has high-availability through two replicas.

We make sure those replicas are scheduled on different nodes through the use of a pod anti-affinity rule.

Note that operator.createCRD is set to false since the functions.openfaas.com custom resource definition is already present on the cluster.

Save the above file as openfaas-prod.yaml and install the OpenFaaS production instance with helm:

helm upgrade openfaas-prod --install openfaas/openfaas \
  --namespace openfaas-prod \
  -f openfaas-prod.yaml

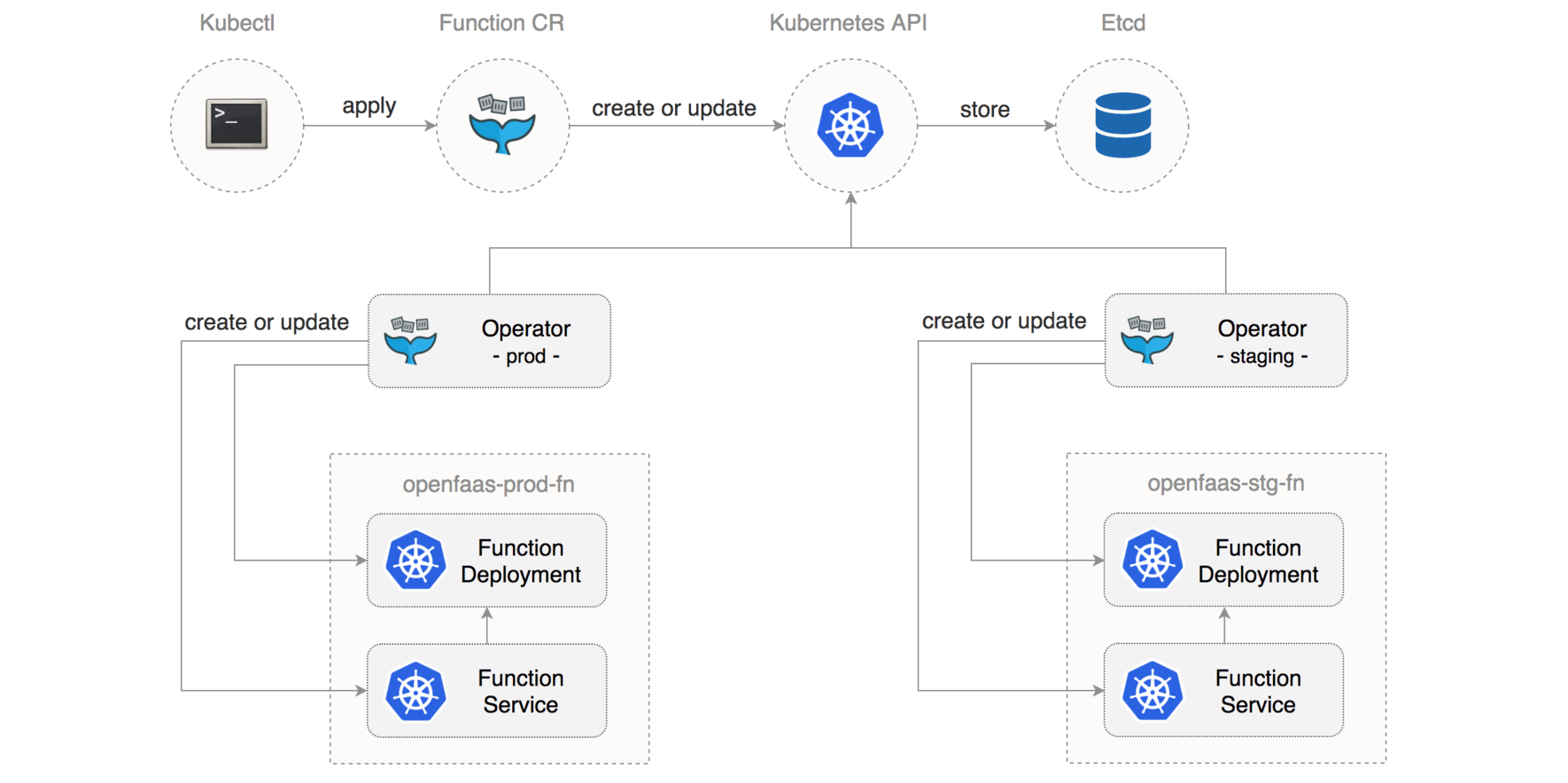

Manage your functions with kubectl

Using the OpenFaaS CRD you can define functions as Kubernetes custom resources.

Create certinfo.yaml with the following content:
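
The function manifest is missing from this copy of the post. The sketch below shows the general shape of a Function custom resource with the preemptible constraint. It assumes the `openfaas.com/v1alpha2` API version used by the operator at that time. The image name is an assumption, so substitute the image you actually deploy.

```shell
# Write a sketch of the certinfo Function resource to certinfo.yaml.
# Assumptions: openfaas.com/v1alpha2 API version and an illustrative
# image name; the namespace is supplied later with "kubectl -n".
cat > certinfo.yaml <<'EOF'
apiVersion: openfaas.com/v1alpha2
kind: Function
metadata:
  name: certinfo
spec:
  name: certinfo
  image: stefanprodan/certinfo:latest
  # Schedule the function on preemptible nodes only.
  constraints:
    - "cloud.google.com/gke-preemptible=true"
EOF
```

Leaving the namespace out of the manifest is what allows the same file to be applied to both staging and production.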

In order to make use of the preemptible node pool, you need to set the affinity constraint to cloud.google.com/gke-preemptible=true so that certinfo is deployed on the fn-pool.

Use kubectl to deploy the function to both environments by changing the namespace parameter:

kubectl -n openfaas-stg-fn apply -f certinfo.yaml

kubectl -n openfaas-prod-fn apply -f certinfo.yaml

The certinfo function reports the SSL certificate information for a domain. Use certinfo to verify both endpoints are live:

curl -d "openfaas-stg.example.com" https://openfaas-stg.example.com/function/certinfo

Issuer Let's Encrypt Authority X3

....

curl -d "openfaas.example.com" https://openfaas.example.com/function/certinfo

Issuer Let's Encrypt Authority X3

....

You can get the list of functions running in a namespace:

kubectl -n openfaas-stg-fn get functions

And you can delete a function from a namespace with:

kubectl -n openfaas-stg-fn delete function certinfo

Developer workflow

When it comes to the development workflow, you will be using the OpenFaaS CLI.

Install faas-cli and log in to the staging instance with:

curl -sL https://cli.openfaas.com | sudo sh

echo $stg_password | faas-cli login -u admin --password-stdin \
  -g https://openfaas-stg.example.com

Using faas-cli, Git and kubectl a development workflow would look like this:

1. Create a function using the Go template

faas-cli new myfn --lang go --prefix gcr.io/gcp-project-id

2. Implement your function logic by editing the myfn/handler.go file

3. Build the function as a Docker image

faas-cli build -f myfn.yml

4. Test the function on your local cluster (click here if you haven’t set up your local environment yet)

faas-cli deploy -f myfn.yml -g 127.0.0.1:31112

5. Initialize a Git repository for your function and commit your changes

git init

git add . && git commit -s -m "Initial function version"

6. Rebuild the image by tagging it with the Git commit short SHA

faas-cli build --tag sha -f myfn.yml

7. Push the image to GCP Container Registry

faas-cli push --tag sha -f myfn.yml

8. Generate the function Kubernetes custom resource

faas-cli generate -n "" --tag sha --yaml myfn.yml > myfn-k8s.yaml

9. Add the preemptible constraint to myfn-k8s.yaml

constraints:
  - "cloud.google.com/gke-preemptible=true"

10. Deploy it on the staging environment

kubectl -n openfaas-stg-fn apply -f myfn-k8s.yaml

11. Test the function on staging. If everything looks good, go to the next step; if not, go back to step 2

cat test.json | faas-cli invoke myfn -g https://openfaas-stg.example.com

12. Promote the function to production

kubectl -n openfaas-prod-fn apply -f myfn-k8s.yaml

In a future post I will show how you can monitor your functions with Prometheus and Grafana and how to take that forward with a managed solution like Weave Cloud.

Do you have questions, comments or suggestions? Tweet to @openfaas.
